Every developer using Claude Code hits the same wall within a week.

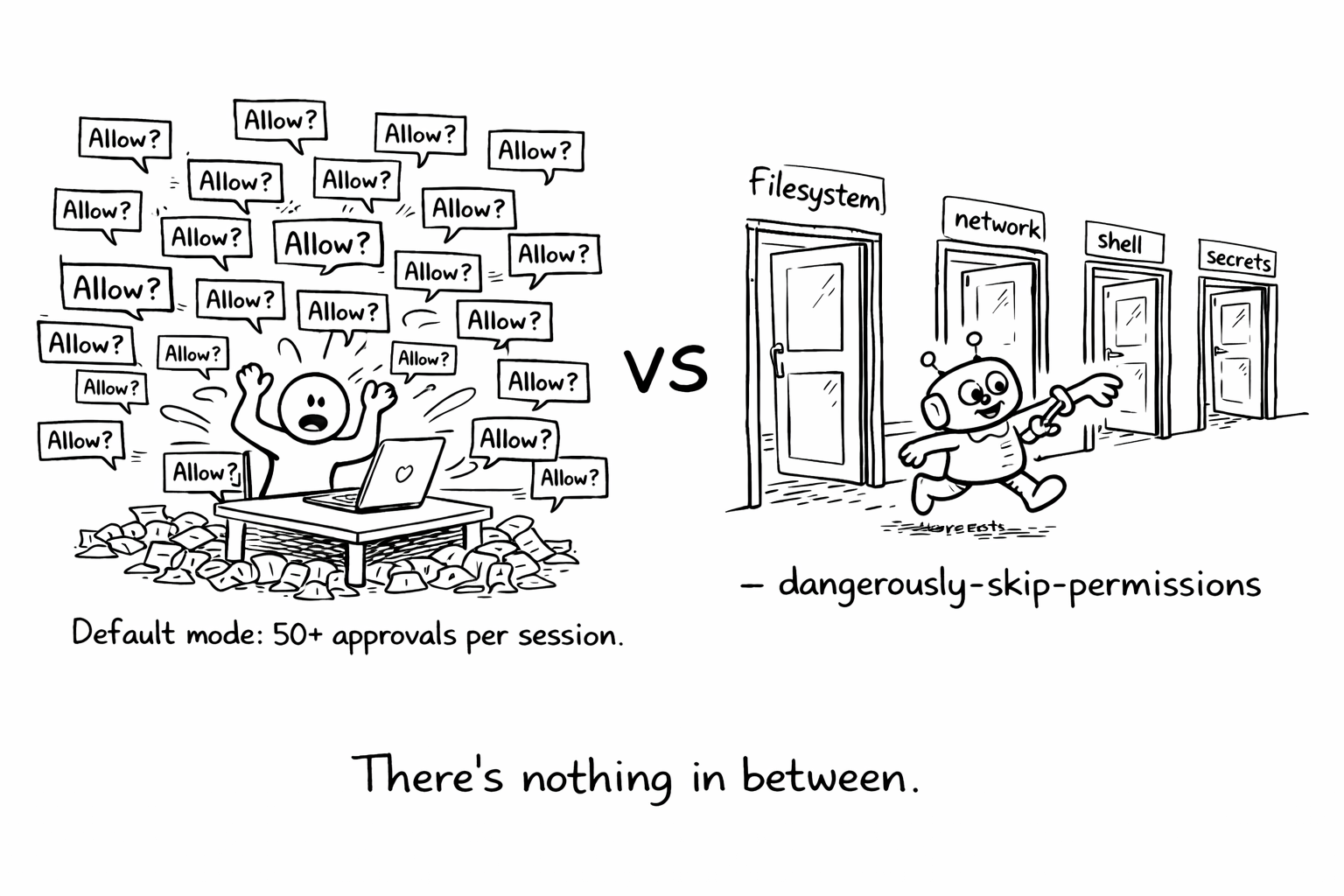

You're deep in a refactor. Claude is rewriting a module, running tests, fixing imports. It's working. Then the permission prompt appears. "Allow bash command: npm test?" You approve. Ten seconds later, another one. "Allow file edit: src/utils/parser.ts?" Approve. Again. Again. Fifty approvals into a session, your flow state is gone and you've context-switched so many times that you've lost track of what Claude was even doing.

So you do what every developer eventually does. You pass --dangerously-skip-permissions.

And now Claude has full, unrestricted access to your filesystem, your network, your shell. Every subagent inherits the same access. No confirmation. No audit trail. No way to scope what it can touch.

This isn't a developer discipline problem. It's a design problem. The permission model offers two modes: constant interruption or total trust. There's nothing in between.

The actual threat model

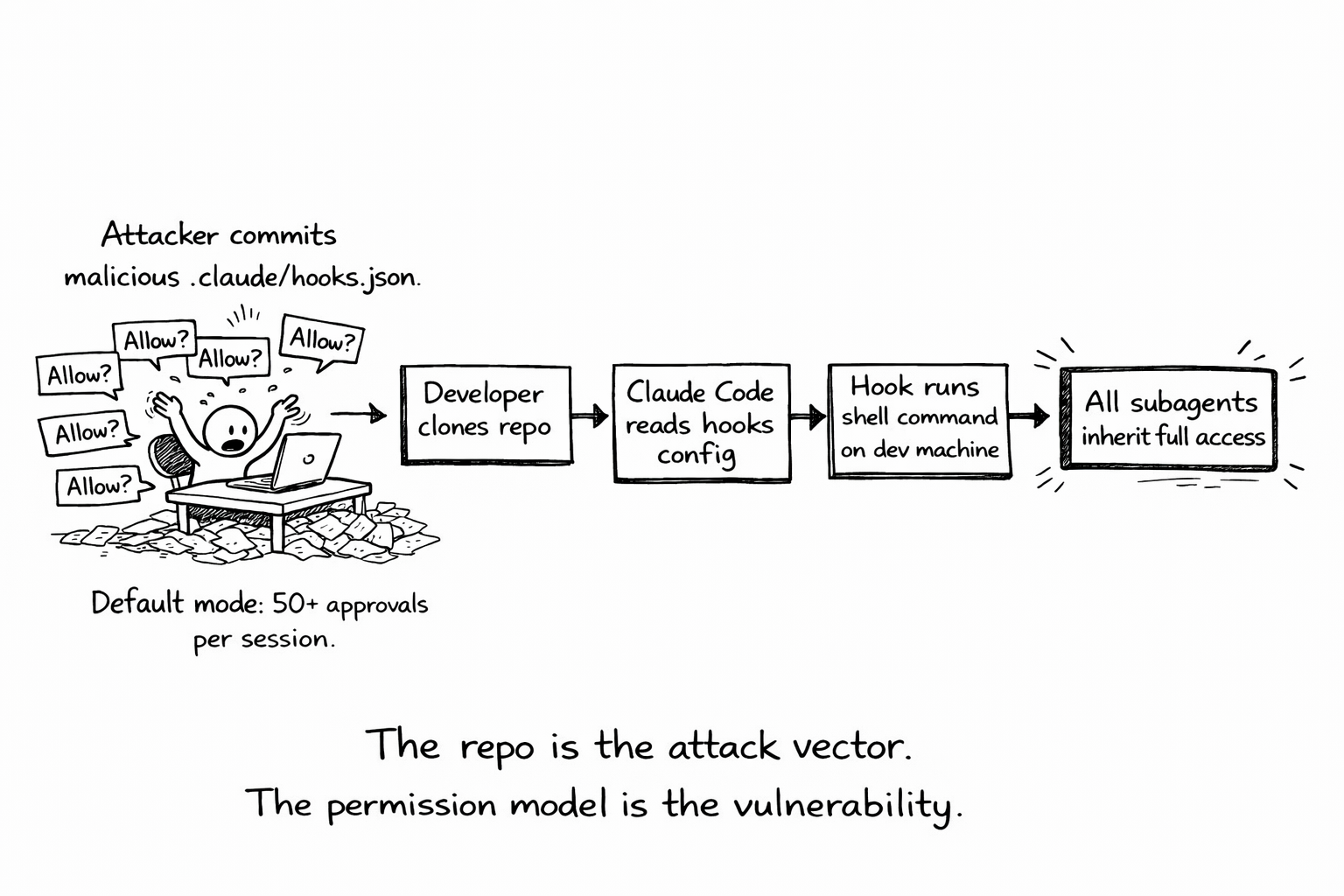

This week, Check Point researchers disclosed three vulnerabilities in Claude Code (including CVE-2025-59536 and CVE-2026-21852) that allowed remote code execution through malicious repository configurations. An attacker could inject hooks into a repo, wait for a developer to clone it, and execute arbitrary code on their machine. Another flaw enabled API key theft by hijacking the endpoint configuration.

Anthropic fixed the specific bugs. But the attack surface they exposed (that an AI agent with broad filesystem and network access can be weaponized through the repositories it touches) isn't a bug. It's a consequence of the permission architecture.

The --dangerously-skip-permissions flag isn't just a convenience shortcut. It's a supply chain risk. When Claude clones a repo, reads its configuration files, and executes commands based on what it finds, every file in that repo becomes an input to the agent's behavior. If the agent has unrestricted permissions, a malicious .claude/settings.json or a crafted hook definition is all it takes.

The flag's name is honest. It is dangerous. But the alternative (approving every mkdir, every cat, every npm install) is so unusable that developers bypass it anyway. The permission model fails because it treats security and usability as opposites.

What's missing

The problem isn't that Claude Code lacks permissions. It's that the permissions are binary and context-free. There's no way to express:

- "Claude can read any file in this repo but can only write to `src/` and `tests/`."

- "Claude can run `npm test` and `npm run lint` but not `npm publish` or `curl`."

- "Claude can access the database in read-only mode but never write to production tables."

- "Claude can use MCP tools for file operations but not for network requests."

These aren't exotic requirements. They're the same constraints every engineering team applies to CI/CD pipelines, service accounts, and junior developers. We solved this problem for humans and machines decades ago. We just haven't applied it to agents.

The permission hook system Anthropic introduced is a step. It lets you write custom logic that evaluates each tool call. But it pushes the entire security model onto the developer. You write the hook script, you handle every edge case, you maintain it as Claude's capabilities change. That's not a permission system. It's an escape hatch.

What a real permission model looks like

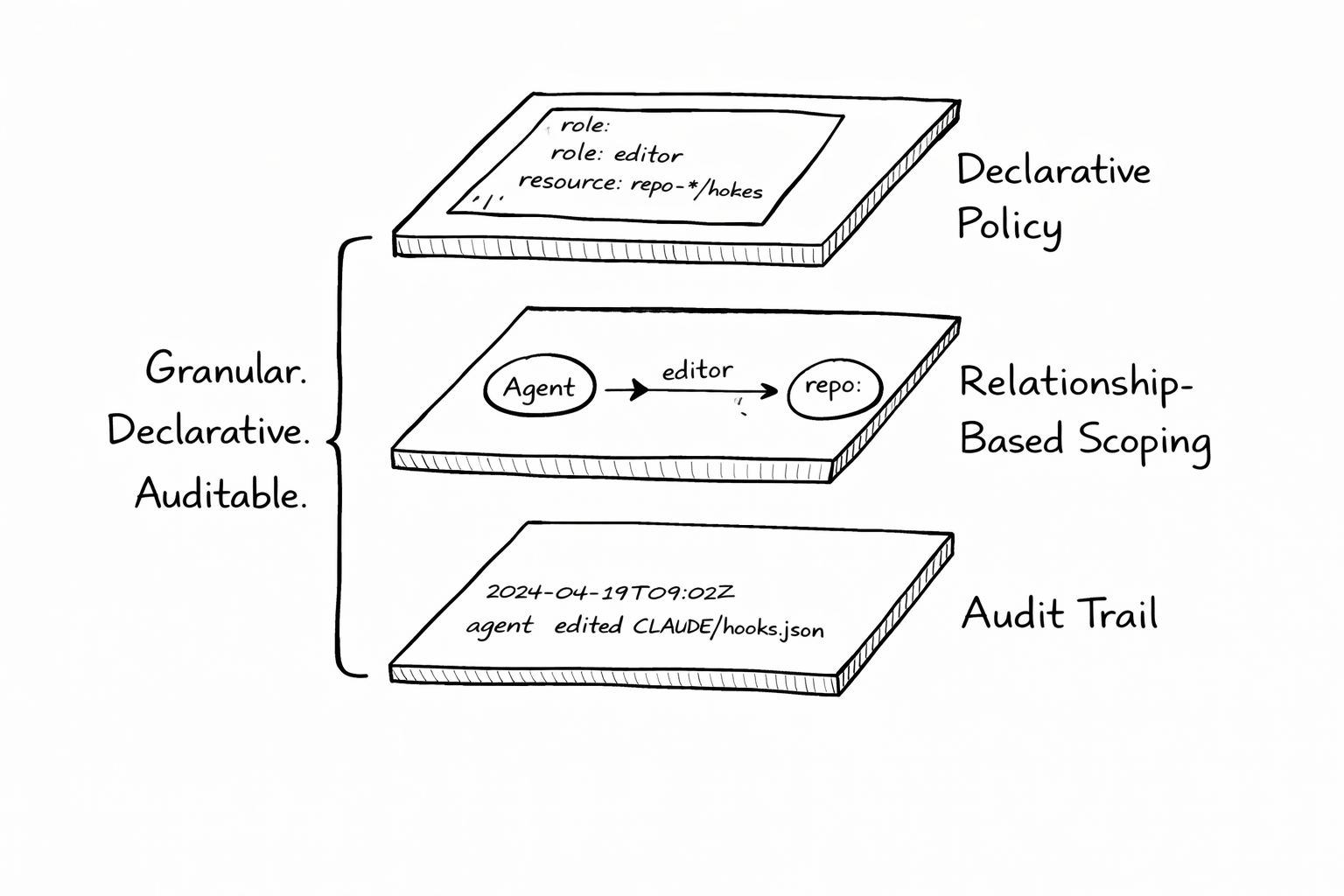

Authorization for AI agents needs three properties that Claude Code's current model doesn't have:

1. Granular, declarative policies

Instead of "allow all" or "ask every time," you define what the agent can do in a policy file that lives with the project:

    agent: claude-code
    policies:
      filesystem:
        read: ["**/*"]
        write: ["src/**", "tests/**", "docs/**"]
        deny: [".env", "*.pem", "secrets/**"]
      commands:
        allow: ["npm test", "npm run lint", "npm run build", "git diff", "git status"]
        deny: ["npm publish", "curl *", "wget *", "rm -rf *"]
      network:
        allow: ["localhost:*", "registry.npmjs.org"]
        deny: ["*"]

The developer defines boundaries once. The agent operates freely within them. No approval prompts for allowed actions. Hard blocks for denied ones. This is how we configure service accounts, firewall rules, and CI pipelines. The pattern exists. It just hasn't been applied here.
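To make "hard blocks for denied ones" concrete, here is a minimal sketch of how a runtime might evaluate that policy. Everything in it is an assumption for illustration: the `POLICY` dict mirrors the hypothetical policy file above (no agent runtime reads this format today), `can_write` and `can_run` are made-up names, and the matching uses Python's `fnmatch`, whose `*` also crosses `/`, so it is looser than gitignore-style globs. Read access is left out to keep it short.

```python
from fnmatch import fnmatch

# Hypothetical in-memory form of the policy file sketched in this article.
POLICY = {
    "filesystem": {
        "write": ["src/**", "tests/**", "docs/**"],
        "deny": [".env", "*.pem", "secrets/**"],
    },
    "commands": {
        "allow": ["npm test", "npm run lint", "npm run build", "git diff", "git status"],
        "deny": ["npm publish", "curl *", "wget *", "rm -rf *"],
    },
}


def matches_any(value: str, patterns: list[str]) -> bool:
    """True if value matches at least one shell-style pattern."""
    return any(fnmatch(value, pattern) for pattern in patterns)


def can_write(path: str, policy: dict = POLICY) -> bool:
    """Deny rules win; anything not explicitly writable is refused."""
    fs = policy["filesystem"]
    if matches_any(path, fs["deny"]):
        return False
    return matches_any(path, fs["write"])


def can_run(command: str, policy: dict = POLICY) -> bool:
    """Deny rules win; anything not on the allow list is refused."""
    commands = policy["commands"]
    if matches_any(command, commands["deny"]):
        return False
    return matches_any(command, commands["allow"])
```

Two design choices carry the weight here: deny is checked before allow, and the default is refusal. A command like `npm test && curl example.com` matches nothing on the allow list, so it is blocked without anyone having to anticipate it.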

2. Relationship-based scoping

Static rules aren't enough for teams. A junior agent working on a feature branch shouldn't have the same access as a senior agent doing a production hotfix. The permissions should reflect the context: who triggered the agent, what task it's performing, which branch it's on, what environment it's targeting.

This is where relationship-based access control matters. OpenFGA (the authorization engine I maintain) models permissions as relationships between entities. "Agent X has editor access to repository Y for task Z." It's the same model as Zanzibar, the authorization system Google uses internally, applied to agent tool access.

The relationships compose. An agent working on a feature branch inherits read access to the main branch but write access only to its own branch. An agent triggered by a maintainer gets broader permissions than one triggered by a first-time contributor. The policy adapts to context without the developer writing custom hooks for every scenario.
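A toy version of that composition, to show the shape of the idea. This is not OpenFGA's API or modeling language; it is an in-memory sketch, and the relation names (`viewer`, `editor`, `acts_for`), the agent and user identifiers, and the `check` function are all hypothetical.

```python
# Relationship tuples: (subject, relation, object).
TUPLES = {
    ("agent:feature-bot", "editor", "branch:feature-x"),
    ("agent:feature-bot", "viewer", "branch:main"),
    ("user:alice", "editor", "branch:main"),        # alice is a maintainer
    ("agent:hotfix-bot", "acts_for", "user:alice"),  # triggered by alice
}

# Which stored relations satisfy a requested relation: editors can also view.
IMPLIED_BY = {
    "viewer": ("viewer", "editor"),
    "editor": ("editor",),
}


def check(subject: str, relation: str, obj: str, tuples: set = TUPLES) -> bool:
    """True if subject, or a principal it acts for, holds a satisfying relation."""
    principals = {subject} | {
        target for (s, r, target) in tuples if s == subject and r == "acts_for"
    }
    return any(
        (principal, stored, obj) in tuples
        for principal in principals
        for stored in IMPLIED_BY[relation]
    )
```

With these tuples, `feature-bot` can edit its own branch and read `main` but not write to it, while `hotfix-bot` can write to `main` only because the person who triggered it can. No hook code changes when the team changes; only the tuples do.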

3. Audit trail by default

Every tool call, every permission check, every denied action should be logged in a structured, queryable format. Not buried in terminal output. Not lost when the session ends.

When an agent modifies 47 files across 12 directories, you need to answer: what did it change, what did it try to change and get blocked, and what permissions were active at the time? This is table stakes for any system that operates autonomously. We require it for CI/CD. We require it for cloud infrastructure. We don't require it for the AI agent that has shell access to a developer's machine.
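What "structured and queryable" could mean in practice: one JSON object per tool call, appended to a file that outlives the session. The sketch below assumes a made-up record shape (`ts`, `tool`, `target`, `decision`, `reason`) and hypothetical helper names; it is not a format any agent runtime emits today.

```python
import json
from datetime import datetime, timezone


def audit_record(tool, target, decision, reason="", now=None):
    """Serialize one permission check as a single JSON line."""
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    return json.dumps(
        {
            "ts": timestamp,
            "tool": tool,
            "target": target,
            "decision": decision,  # "allow" or "deny"
            "reason": reason,
        },
        sort_keys=True,
    )


def denied(lines):
    """Answer 'what did it try to change and get blocked?' from the log."""
    records = [json.loads(line) for line in lines]
    return [record for record in records if record["decision"] == "deny"]
```

Appending each `audit_record(...)` line to a file gives you something you can grep, load into a dataframe, or ship to whatever log store the rest of your infrastructure already uses.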

The gap between "coding tool" and "production agent"

Claude Code today is positioned as a developer tool, a capable one. But the trajectory is clear. Anthropic is building toward agents that operate autonomously, handle multi-step tasks, coordinate with other agents, and run in production environments. Claude Code is the first step on that path.

The permission model needs to grow with that trajectory. What works for a single developer on a laptop (approve/deny prompts) doesn't work for a team of agents operating across repositories, environments, and services. The security model for that world looks like infrastructure, not dialog boxes.

The pieces exist. Fine-grained authorization engines like OpenFGA handle the policy layer. Structured audit logging handles the observability layer. Declarative policy files handle the developer experience layer. Platforms like Ona already run each dev environment in an isolated, ephemeral environment with scoped access, exactly the kind of boundary agents need. The missing piece is integration: wiring these into the agent runtime so that security is a property of the system, not a burden on the developer.

What to do now

If you're using Claude Code today:

- Don't use `--dangerously-skip-permissions` on repos you didn't write. The supply chain risk is real. The Check Point disclosure proved it.

- Use permission hooks to block the obvious. Deny `rm -rf`, deny network access to unknown hosts, deny writes to `.env` files. It's manual, but it's better than nothing.

- Scope your CLAUDE.md. The instructions you put in your CLAUDE.md file are the closest thing to a policy definition Claude Code has today. Be explicit about what the agent should and shouldn't touch.

- Track what the agent does. If you're running Claude Code in autonomous mode, pipe the output to a log. When something breaks, you'll want to know what happened.
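For the hook item in that list, a rough sketch of what "block the obvious" can look like. It rests on assumptions you should verify against the current Claude Code hooks documentation: that a pre-tool-use hook receives a JSON payload on stdin with `tool_name` and `tool_input` fields, that the shell and file tools are named `Bash`, `Edit`, and `Write` with `command` and `file_path` inputs, and that exit code 2 blocks the call. The prefix and suffix lists are my own examples.

```python
import json
import sys

# Example deny lists; tune these for your own project.
BLOCKED_COMMAND_PREFIXES = ("rm -rf", "curl ", "wget ", "npm publish")
PROTECTED_SUFFIXES = (".env", ".pem")


def decide(event: dict) -> tuple[int, str]:
    """Return (exit_code, reason); 2 is assumed to block, 0 lets the call through."""
    tool = event.get("tool_name", "")
    tool_input = event.get("tool_input", {})
    if tool == "Bash":
        command = tool_input.get("command", "").strip()
        for prefix in BLOCKED_COMMAND_PREFIXES:
            if command.startswith(prefix):
                return 2, f"blocked command: {prefix.strip()}"
    if tool in ("Edit", "Write"):
        path = tool_input.get("file_path", "")
        if path.endswith(PROTECTED_SUFFIXES):
            return 2, f"blocked write to protected file: {path}"
    return 0, ""


def main(stream) -> int:
    """Read one hook payload from stream and report the decision on stderr."""
    code, reason = decide(json.load(stream))
    if reason:
        print(reason, file=sys.stderr)
    return code

# As a hook script you would end the file with: sys.exit(main(sys.stdin))
```

Notice how little this catches. `cd /tmp && rm -rf .` sails past a prefix check, and closing that gap means parsing shell syntax yourself. That is the maintenance burden I mean when I call hooks an escape hatch.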

The longer-term fix isn't something individual developers can build. It requires the agent runtime itself to support granular, declarative, auditable permissions. That's an infrastructure problem, not a prompting problem.

I've been building toward this with agentic-authz, an OpenFGA-based authorization gateway for AI agents. The permission model for AI agents is an unsolved problem. The tools that solve it will define whether agents stay as developer toys or become production infrastructure.

I maintain OpenFGA (CNCF Incubating) and build agent security infrastructure. If your team is figuring out permissions for AI agents, I'd like to hear about it.